Lesson 1. When Machines Decipher Worldview: Artificial Neural Networks (2012)

Dr. Son Pham

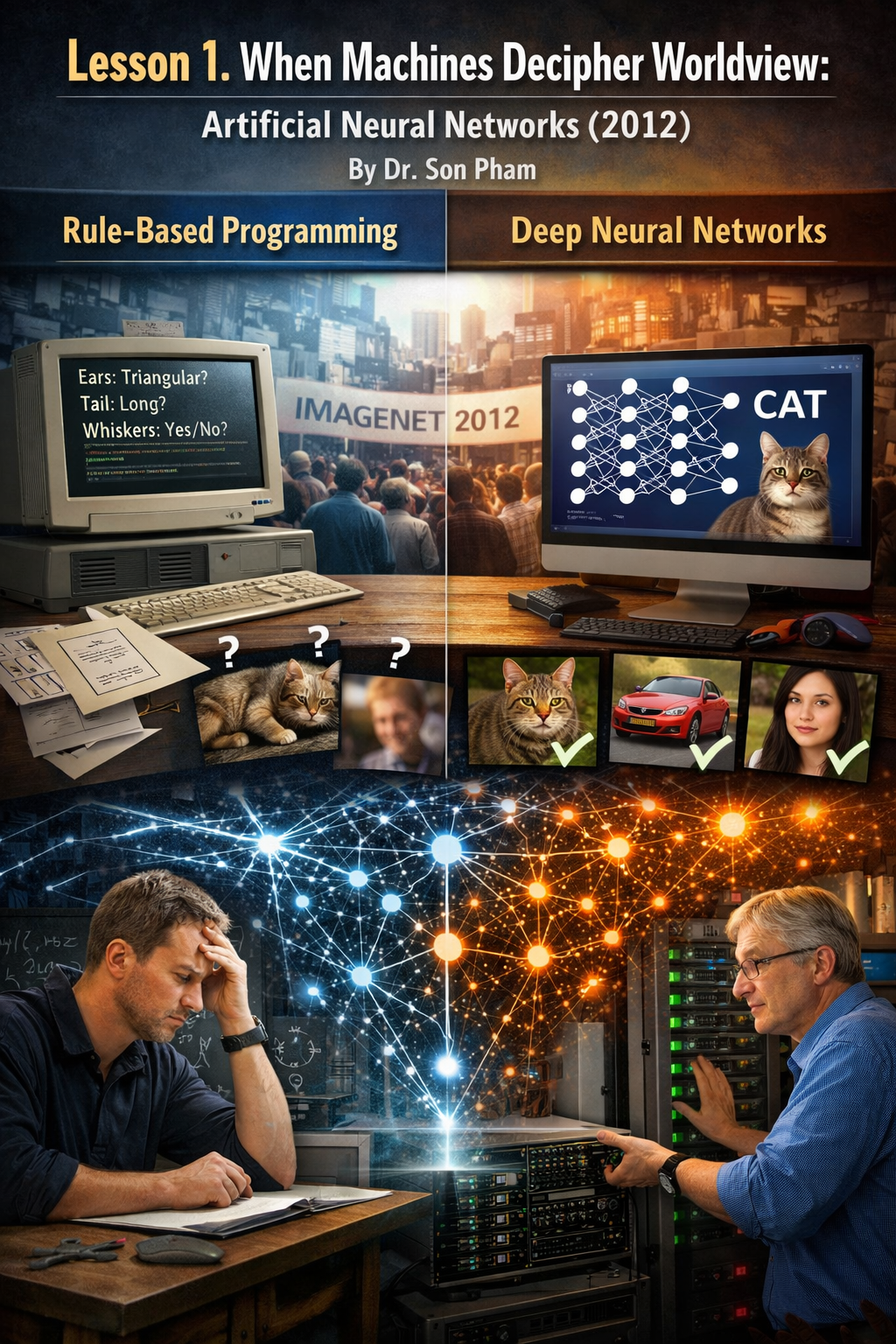

Before 2012, information technology was caught in an interesting paradox. Computers could perform billions of calculations per second, simulate the global climate, and even pilot space probes, yet they struggled with a seemingly simple task: looking at a photo and saying what it shows. A child can instantly recognize a cat, a car, or a loved one's face, while a computer was stumped by such obvious things.

The reason lay not in computing power but in the way humans taught machines to understand the world. For decades, computer systems were built on a rule-based programming model: humans observe phenomena, write down rules, and then ask machines to apply those rules. This approach is very effective for well-defined logical problems such as calculation or data management, but it proves weak in the face of the highly variable world of images.

The turning point came when scientists shifted from the mindset of "teaching the machine each rule" to "letting the machine learn from the data on its own". In 2012, the results of the ImageNet Large Scale Visual Recognition Challenge showed that deep neural networks could go far beyond any previous method. From that moment, artificial intelligence entered a new era.

The impasse of rule-based programming thinking

In the early stages of artificial intelligence, software engineers tried to teach computers to recognize the world by listing characteristics. For example, to identify a cat, they would observe it and pick out typical signs: triangular ears, whiskers, and a long tail. These characteristics were then translated into logical conditions in a computer program. When an image satisfied those conditions, the system concluded that it showed a cat.

At first glance, this approach seems reasonable because it resembles how humans describe things. However, problems arise when it is applied to the real world. A cat may lie curled up so that its tail does not appear in the frame, may turn its back so that its whiskers are hidden, or may be partly obscured by objects around it. When just one small detail changes, the entire rule system can reach the wrong conclusion.
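The brittleness described above can be illustrated with a minimal sketch. The function `is_cat` and its feature names are hypothetical, invented here only to mirror the three signs listed earlier; a real system of that era would have needed image-processing code just to decide whether each feature was present.

```python
# Hypothetical hand-written rules; the feature names are illustrative only.
def is_cat(features: dict) -> bool:
    """Return True only if every hand-coded rule is satisfied."""
    return bool(
        features.get("triangular_ears", False)
        and features.get("whiskers", False)
        and features.get("long_tail", False)
    )

# A cat photographed from the side: all three signs are visible.
side_view = {"triangular_ears": True, "whiskers": True, "long_tail": True}

# The same cat curled up: the tail is hidden from the camera.
curled_up = {"triangular_ears": True, "whiskers": True, "long_tail": False}

print(is_cat(side_view))  # True
print(is_cat(curled_up))  # False, although it is the same cat
```

Adding a rule for the curled-up case would fix this one photo, but every new pose, angle, or occlusion would need yet another rule.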

Researchers call this phenomenon the infinite variability of data. In the real world, no two images are exactly alike. Lighting changes, viewing angles change, colors change, and every small change can break rigid rules. So even when engineers added thousands of rules, the system still could not achieve the flexibility of human perception.

AlexNet 2012: Breaking the Limits with Deep Neural Networks

The turning point came when Geoffrey Hinton's team introduced a system called AlexNet. Instead of relying on a list of rules written by humans, the system was built on an artificial neural network. The core idea is to create a structure of multiple data-processing layers, loosely modeled on how neurons in the brain link together.

In this model, the image is fed in as pixels. The neural network does not try to understand the entire image right away. Instead, it analyzes the image layer by layer. The first layers recognize simple elements such as edges, lines, or corners. The next layers begin to piece those features together into more complex shapes such as curves or geometric structures.

As the data passes through more layers, the neural network gradually recognizes higher-level features such as eyes, ears, or fur texture. Finally, all the information is combined to draw a conclusion about the object in the photo. Importantly, these features are not specified by the programmer but are discovered by the system itself during the learning process.
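To make the edge-detecting role of the first layers more concrete, here is a minimal sketch of a small filter sliding over an image, the operation used by convolutional networks such as AlexNet. The 4x4 image and the 2x2 filter values are assumptions chosen by hand for illustration; in a trained network the filter values are learned from data rather than written by a programmer.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    As in most deep learning libraries, the kernel is not flipped,
    so this is strictly a cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for a in range(kh):
                for b in range(kw):
                    total += image[i + a][j + b] * kernel[a][b]
            row.append(total)
        output.append(row)
    return output

# Toy grayscale image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked filter that responds where brightness rises from left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

response = convolve2d(image, kernel)
print(response)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The response is non-zero only in the middle column, exactly where the dark region meets the bright one. Later layers would take maps like this as their input and combine them into more complex shapes.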

Backpropagation Mechanism: The Heart of Evolution

A neural network can be very complex, but without a learning mechanism it is just a static mathematical structure. The secret that lets neural networks improve over time lies in the backpropagation algorithm, short for backward propagation of errors. This algorithm allows the system to adjust the connections inside the network based on the error between the prediction and the correct result.

The learning process begins when the neural network is fed a labeled dataset, such as millions of images of cats and dogs. The system makes a prediction for each image, then compares the prediction with the correct answer. A loss function then computes a value that measures how far the prediction deviates from the correct answer.

This error signal is then propagated back through the layers of the network. In the process, the connection weights between the neurons are adjusted little by little. The process is repeated millions of times, so that the neural network gradually reduces its errors and learns the general patterns in the data. This is how computers are "trained", much like a learner improving through experience.
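The loop of predicting, measuring the loss, sending the error backward, and nudging the weights can be sketched on a deliberately tiny network. Everything in this example is an assumption made for illustration: the network has only two weights (`w1` for a hidden layer, `w2` for the output layer), the dataset is four made-up points, and the learning rate and starting values are arbitrary. Real networks have millions of weights, but the chain-rule steps have the same shape.

```python
import math

# Toy labeled dataset (hypothetical): targets follow y = 1.5 * tanh(2 * x).
DATA = [(x, 1.5 * math.tanh(2.0 * x)) for x in (-1.0, -0.5, 0.5, 1.0)]

def mean_loss(w1, w2):
    """Average squared error of the two-layer model over the dataset."""
    return sum((w2 * math.tanh(w1 * x) - y) ** 2 for x, y in DATA) / len(DATA)

def train(epochs=500, lr=0.1, w1=0.1, w2=0.1):
    """Train with backpropagation; return final weights and per-epoch loss."""
    history = []
    for _ in range(epochs):
        for x, y in DATA:
            # Forward pass: hidden layer, then output layer.
            h = math.tanh(w1 * x)
            y_hat = w2 * h
            # Backward pass: chain rule applied from the output back to w1.
            d_yhat = 2.0 * (y_hat - y)      # d(loss)/d(y_hat) for squared error
            d_w2 = d_yhat * h               # d(loss)/d(w2)
            d_h = d_yhat * w2               # error signal sent to the hidden layer
            d_w1 = d_h * (1.0 - h * h) * x  # tanh'(z) = 1 - tanh(z)^2
            # Adjust each weight a little, against its gradient.
            w1 -= lr * d_w1
            w2 -= lr * d_w2
        history.append(mean_loss(w1, w2))
    return w1, w2, history

loss_before = mean_loss(0.1, 0.1)
w1, w2, history = train()
print(f"loss before training: {loss_before:.4f}")
print(f"loss after training:  {history[-1]:.4f}")
```

Running the sketch should show the loss after training well below the starting loss, which is the "gradually reduces its errors" behaviour described above.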

GPU and Big Data: The two "lungs" that provide power

Although the idea of neural networks had been around for decades, it wasn't until the early 2010s that it really took off. One important reason is the emergence of GPUs, graphics processors originally designed to serve the gaming industry. GPUs can perform thousands of calculations in parallel, which makes them well suited to the matrix operations in neural networks.
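A short sketch may help show why these matrix operations parallelize so well. The function `layer_forward` and the numbers below are hypothetical and run on an ordinary CPU; the point is only that each output of a layer is an independent sum of products, so hardware with many cores can compute all of them at once.

```python
def layer_forward(weights, inputs):
    """Compute one layer's outputs as a matrix-vector product.

    Each output depends only on its own row of weights and the shared inputs,
    so the rows could be evaluated at the same time on parallel hardware.
    """
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]

# Hypothetical layer: 2 neurons, each connected to 3 inputs.
weights = [
    [1, 0, -1],
    [2, 1, 0],
]
inputs = [3, 4, 5]

outputs = layer_forward(weights, inputs)
print(outputs)  # [-2, 10]
```

A real layer may have thousands of rows and be applied to many images per step, which is where executing the rows in parallel pays off.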

Thanks to GPUs, training Deep Learning models became feasible in terms of time. Problems that used to take weeks or months of computation on a CPU could be reduced to a few days. This improvement opened up the possibility of building deeper and more complex neural networks.

Alongside computing power came the explosion of Internet data. Billions of images shared online became a huge source of data for machine learning systems. When there is enough diverse data, neural networks can learn general features of the world instead of just memorizing specific examples. Thanks to the combination of GPUs and Big Data, Deep Learning stepped out of the lab and became the foundation of modern artificial intelligence.

When computers start "seeing" the world

After AlexNet's resounding success at the ImageNet Large Scale Visual Recognition Challenge, the scientific community quickly realized that it was at a major turning point. For the first time, a computer system could not only process data but also learn to extract complex features from images. The error rate in image recognition plummeted, and the approach far outperformed all previous computer vision methods.

This success created a domino effect among artificial intelligence researchers. Labs around the world started to apply deep neural networks to a variety of problems, from facial recognition to video analysis. Big tech companies were quick to invest in deep learning, because they realized that the ability to understand images and sounds would open up countless commercial applications.

In just a few years, technologies that once existed only in the lab began to appear in everyday life. Smartphones can recognize faces to unlock, apps can automatically classify photos, and surveillance systems can detect unusual behavior. All of this rests on the same principle: neural networks learn to identify patterns from data.

From computer vision to understanding language and behavior

Once scientists had demonstrated that deep neural networks could understand images, the next question quickly arose: could this method be applied to other forms of data? The answer is yes. The ideas developed in Deep Learning quickly spread to the field of natural language processing, where computers learn to understand human text and speech.

In these systems, the input data is sequences of words instead of pixels. Neural networks analyze how words appear together in billions of sentences on the Internet to learn the structure of a language. Over time, the system begins to capture more abstract concepts such as context, meaning, and even the emotional nuances of a sentence.
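The raw signal of "words appearing together" can be shown with a simple count. This sketch is not a neural network: it only tallies which word pairs share a sentence in a three-sentence corpus invented for the example, and the helper `cooccurrence_counts` is a hypothetical name. Language models learn from statistics of this kind, at vastly larger scale and with far richer representations.

```python
from collections import Counter
from itertools import combinations

# Tiny made-up corpus standing in for billions of sentences.
corpus = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases the dog",
]

def cooccurrence_counts(sentences):
    """Count, for each pair of distinct words, how many sentences contain both."""
    counts = Counter()
    for sentence in sentences:
        words = sorted(set(sentence.split()))  # sort so each pair has one fixed order
        for pair in combinations(words, 2):
            counts[pair] += 1
    return counts

counts = cooccurrence_counts(corpus)
print(counts[("cat", "milk")])    # 1
print(counts[("drinks", "the")])  # 2
print(counts[("cat", "water")])   # 0
```

Even in this toy corpus, "cat" shares a sentence with "milk" but never with "water", a faint hint of the associations a model can pick up from real text.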

Thanks to those advances, many technologies that are familiar today became possible. Voice assistants can understand users' questions, machine translation systems can translate between multiple languages, and search engines can understand the intent behind a query. Importantly, all of these capabilities come from the same foundation: deep learning based on neural networks.

A philosophical shift in the way artificial intelligence is built

From the perspective of the philosophy of science, the rise of Deep Learning is not only a technical advance but also a change in the way people approach intelligence. For decades, scientists had tried to describe intelligence using clear logical rules. They believed that with enough rules, computers could simulate human thinking.

But Deep Learning shows that intelligence can emerge in a different way. Instead of building knowledge from rigid rules, systems can learn directly from experience, much as humans learn by observing the world. This brings artificial intelligence closer to biology, because the learning process of artificial neural networks has many similarities to the way networks of neurons in the brain change as people learn.

This philosophical shift also explains why ideas that were once dismissed as overly simple have become so powerful in the era of big data. When there is enough data and computing power, machine learning models can discover regularities that are difficult for humans to describe in words.

Widespread impact in science and industry

After 2012, Deep Learning quickly became one of the fastest-growing fields of study in computer science. Universities opened more training programs in artificial intelligence, while tech companies poured billions of dollars into research and applications. From medicine to transportation, from finance to education, nearly every industry began to explore the possibilities of this technology.

In medicine, neural networks are used to analyze X-ray and MRI images to help doctors detect diseases early. In transportation, computer vision systems help autonomous vehicles recognize pedestrians and road signs. In e-commerce, deep learning algorithms analyze user behavior to recommend suitable products.

It's worth noting that many of these applications were previously considered too complex for computers. But as systems learn from millions or billions of examples, they begin to reach accuracy that equals or even surpasses that of humans in certain specific tasks.

Limits and new questions

While Deep Learning has produced impressive achievements, it also raises many new questions. One of the biggest challenges is transparency. Deep neural networks often act as a "black box" in which decisions are made based on millions of parameters that are difficult for humans to explain clearly.

In addition, machine learning systems rely heavily on data. If the training data is biased or lacks diversity, the model can learn incorrect conclusions. This is especially important in sensitive fields such as healthcare, law, or finance, where algorithmic decisions can directly affect people.

These challenges have led the scientific community to explore new research directions such as explainable artificial intelligence, learning from less data, and combining logical knowledge with deep learning. The goal is to build systems that are not only powerful but also reliable.

The beginning of the modern artificial intelligence era

Looking back at history, 2012 can be seen as an important transition point in the development of artificial intelligence. Before that time, many people were still skeptical about whether computers could truly understand the world. After the Deep Learning turning point, the question is no longer "is it possible" but "how far will it go".

From image recognition to language understanding, from data analysis to content creation, neural network-based systems are gradually becoming the foundation of many modern technologies. These advances also lay the groundwork for a new generation of artificial intelligence capable of interacting with humans in a more natural way.

So the story of artificial neural networks is more than just a chapter in the history of computer science. It marks the moment when machines began to move from only executing commands to learning from the world. That is when the machine began to build itself a "worldview" based on data and experience.