Lesson

3. When Artificial Intelligence Enters the Physical World: Embodied AI

Dr. Son Pham

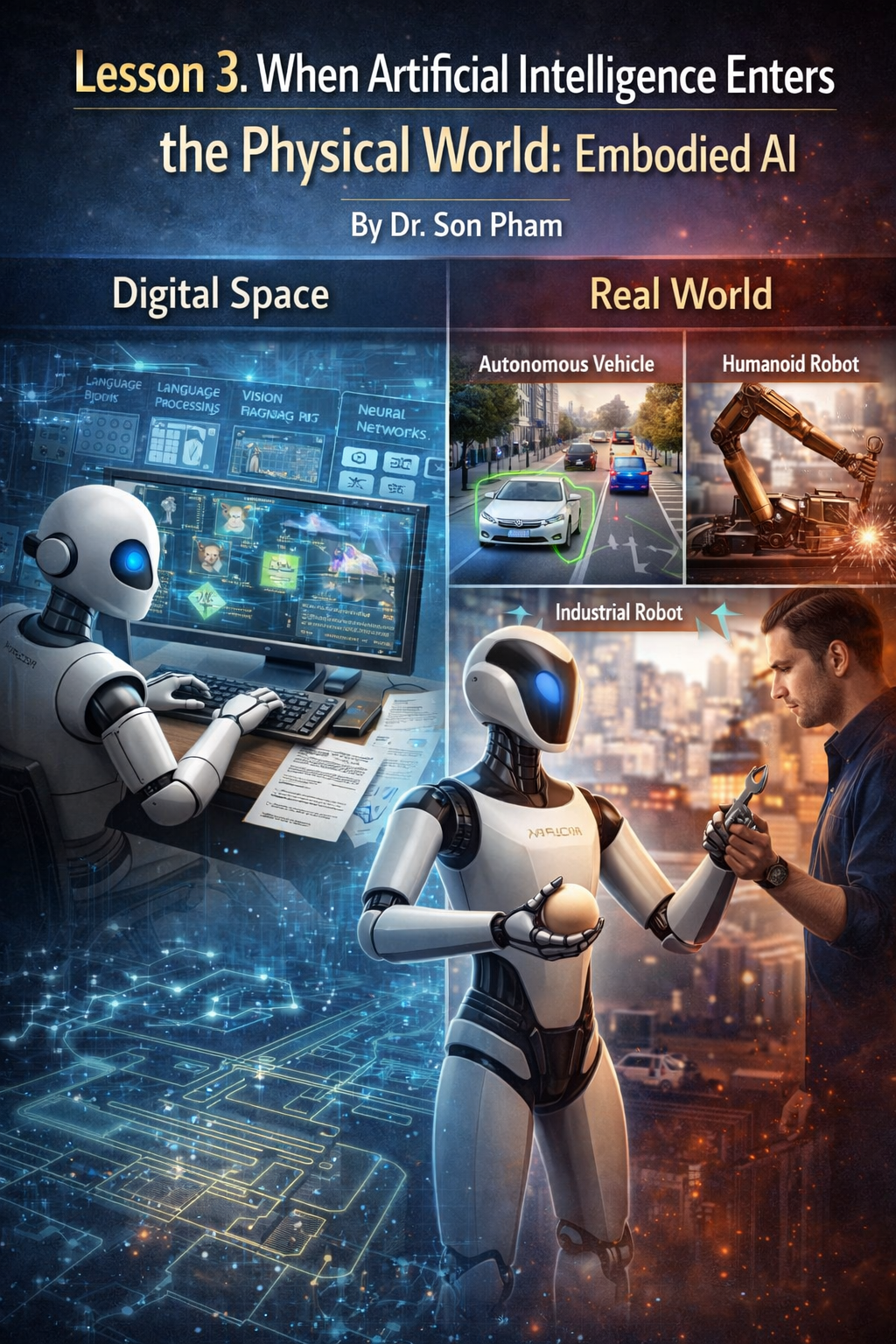

If the 2012 turning point helped computers see the world through images, and 2017 helped them understand human language, the next phase of artificial intelligence is even more ambitious: taking that intelligence off the computer screen and putting it into a body that works in the physical world. This is the field known as Embodied AI.

In earlier AI systems, most of the computation took place in digital space. A language model can write text and a computer vision system can recognize images, but all of this happens inside a data environment. Once AI enters the physical world, things become much more complicated: artificial intelligence must understand not only data, but also gravity, motion, time, and real-world risk.

This transformation is taking place in many fields, such as self-driving cars, industrial robots, and humanoid robots. These systems must not only observe and analyze, but also make decisions and act on them. A mistake in a piece of text can be corrected; a mistake in the physical world can have serious consequences. For this reason, Embodied AI is often regarded as the most difficult chapter in the development of artificial intelligence.

The difference between the digital space and the real world

In digital space, AI systems operate in a relatively safe environment. If a language model mispredicts a word or generates a sentence that makes no sense, the user simply deletes it and tries again. Mistakes have almost no physical consequences, which lets researchers test many new ideas without worrying much about risk.

However, when artificial intelligence enters the real world, every decision can have direct consequences. Imagine a self-driving car on the highway: if the system misreads a sign or brakes at the wrong moment, it can endanger many people. Unlike a software environment, the physical world has no "undo" button.

In addition, autonomous AI systems must process data in real time and cope with an uncertain environment. The weather can change, people can behave unexpectedly, and rare situations can arise at any moment. This makes building an AI system that works safely in the real world one of the biggest technical challenges of our time.

The philosophy of "pure vision" in autonomous vehicles

In the field of autonomous vehicles, many technology companies rely on sophisticated sensor suites to help vehicles understand their surroundings. These systems typically combine several technologies, such as cameras, radar, and laser sensors. Among them, LiDAR (a laser-based ranging sensor) is considered one of the most important tools because it can build highly accurate three-dimensional maps of the surrounding space.

However, Tesla chose a different direction. Under Elon Musk's leadership, the company pursues a philosophy of "pure vision": building autonomous driving systems that rely mainly on cameras and neural networks. The argument is simple: the world's transportation system is designed for humans, and humans drive mostly with their eyes. So if a neural network can be built that understands images as well as a human does, a car should be able to drive safely using sight alone.

This philosophy led to an approach called end-to-end deep learning. Instead of dividing the system into many hand-engineered processing steps, the image data from the cameras is fed directly into a neural network, which learns to understand the scene, predict how objects will move, and make driving decisions. This is a bold approach because it places much of the responsibility for understanding the world on the neural network's ability to learn.
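
To make the end-to-end idea concrete, here is a deliberately tiny sketch, assuming 4-pixel grayscale "images" and a single linear layer in place of a deep network. The names `predict_steering`, `train_end_to_end`, and `DEMOS` are hypothetical, and this is not Tesla's system: it only shows that the mapping from raw pixels to a steering value is learned from examples of human driving (behavior cloning) rather than written as explicit rules.

```python
import random


def predict_steering(weights, bias, pixels):
    """Map raw pixel values directly to a steering value (-1 left, +1 right)."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def train_end_to_end(dataset, lr=0.05, epochs=500, seed=0):
    """Fit the model to (pixels, human_steering) pairs with stochastic gradient descent.

    No lane detector or hand-written rule sits between the pixels and the output:
    the learned weights alone decide how an image becomes a steering command.
    """
    rng = random.Random(seed)
    samples = list(dataset)          # copy so the caller's list is not reordered
    n_pixels = len(samples[0][0])
    weights = [0.0] * n_pixels
    bias = 0.0
    for _ in range(epochs):
        rng.shuffle(samples)
        for pixels, target in samples:
            error = predict_steering(weights, bias, pixels) - target
            # Gradient step on the squared error 0.5 * error**2
            weights = [w - lr * error * p for w, p in zip(weights, pixels)]
            bias -= lr * error
    return weights, bias


# Toy data: bright pixels (1.0) mark where the road is in a 4-pixel image.
DEMOS = [
    ([1.0, 1.0, 0.0, 0.0], -1.0),  # road bends left     -> steer left
    ([0.0, 1.0, 1.0, 0.0], 0.0),   # road straight ahead -> go straight
    ([0.0, 0.0, 1.0, 1.0], 1.0),   # road bends right    -> steer right
]

weights, bias = train_end_to_end(DEMOS)
for pixels, human in DEMOS:
    print(pixels, "human:", human, "model:", round(predict_steering(weights, bias, pixels), 3))
```

A real end-to-end driving network replaces the linear layer with millions of parameters and the three toy images with large amounts of recorded fleet video, but the training signal has the same shape: images in, the human's action as the target.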

How an autonomous car "thinks"

To understand how AI drives an autonomous vehicle, we can break the process into three main steps: perception, planning, and action. Each step is supported by neural networks and other machine learning algorithms.
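
The three steps can be pictured as a loop that runs many times per second. The sketch below is a minimal illustration, assuming perception has already reduced the camera images to a couple of numbers; the `Scene` and `ControlCommand` types, the distance thresholds, and the actuator values are all hypothetical, chosen only to show how the output of each step feeds the next.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    """What perception extracted from the camera frames (heavily simplified)."""
    lead_vehicle_distance_m: float
    pedestrian_crossing: bool


@dataclass
class ControlCommand:
    """Low-level commands for the actuators."""
    steering: float  # -1.0 (full left) to 1.0 (full right)
    throttle: float  # 0.0 to 1.0
    brake: float     # 0.0 to 1.0


def perceive(detections: dict) -> Scene:
    # Stand-in for the vision models: detections are passed in directly here.
    return Scene(
        lead_vehicle_distance_m=detections.get("lead_vehicle_m", float("inf")),
        pedestrian_crossing=detections.get("pedestrian_crossing", False),
    )


def plan(scene: Scene) -> str:
    # Hypothetical rule-based planner with made-up distance thresholds.
    if scene.pedestrian_crossing or scene.lead_vehicle_distance_m < 10:
        return "stop"
    if scene.lead_vehicle_distance_m < 30:
        return "slow_down"
    return "cruise"


def act(decision: str) -> ControlCommand:
    # Translate the high-level decision into actuator commands.
    commands = {
        "stop": ControlCommand(steering=0.0, throttle=0.0, brake=1.0),
        "slow_down": ControlCommand(steering=0.0, throttle=0.0, brake=0.3),
        "cruise": ControlCommand(steering=0.0, throttle=0.4, brake=0.0),
    }
    return commands[decision]


def control_step(detections: dict) -> ControlCommand:
    """One tick of the perceive -> plan -> act loop."""
    return act(plan(perceive(detections)))


print(control_step({"lead_vehicle_m": 20.0}))
# prints: ControlCommand(steering=0.0, throttle=0.0, brake=0.3)
```

Keeping the stages as separate functions mirrors the classic modular design; an end-to-end system blurs these boundaries by letting one network learn several of them at once.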

The first step is perception. Cameras around the car continuously send images to the car's central computer. The system uses computer vision models to analyze each frame and identify important elements such as the road surface, lane markings, other vehicles, pedestrians, and signs. An important technique in this step is semantic segmentation, which assigns every pixel in an image to a class (road, vehicle, pedestrian, and so on) so the AI can tell the different types of objects in a scene apart.
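
At its core, semantic segmentation gives every pixel a class label. A segmentation network typically outputs one score map per class; the sketch below shows only that final labeling step, assuming the score maps already exist and picking the highest-scoring class at each pixel. The class list and the toy 2x2 scores are made up for illustration.

```python
CLASSES = ["road", "lane_marking", "vehicle", "pedestrian"]  # illustrative label set


def segment(score_maps):
    """Assign each pixel the class with the highest score.

    score_maps[c][y][x] is the (hypothetical) network score for class c at pixel (y, x).
    """
    n_classes = len(score_maps)
    height, width = len(score_maps[0]), len(score_maps[0][0])
    return [
        [CLASSES[max(range(n_classes), key=lambda c: score_maps[c][y][x])] for x in range(width)]
        for y in range(height)
    ]


scores = [
    [[0.9, 0.1], [0.2, 0.1]],    # road
    [[0.05, 0.8], [0.1, 0.1]],   # lane_marking
    [[0.03, 0.05], [0.6, 0.2]],  # vehicle
    [[0.02, 0.05], [0.1, 0.6]],  # pedestrian
]
print(segment(scores))  # [['road', 'lane_marking'], ['vehicle', 'pedestrian']]
```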

Once it understands its surroundings, the system moves on to the planning step. Here, the AI evaluates several possible maneuvers in a fraction of a second. For example, if the car in front slows down or a pedestrian is about to cross the street, the system quickly weighs options such as slowing down, changing lanes, or keeping its current course. Finally, in the action step, control commands are sent to the vehicle's mechanical systems, such as the steering, brakes, and throttle, to carry out that decision.
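
The planning step can be sketched as scoring each candidate maneuver and picking the cheapest one. This is a hypothetical cost function, assuming a simple time-to-collision risk term, a guessed effect of braking, and hand-picked weights; real planners weigh many more factors and often use learned models, but the pattern of comparing options and selecting one is the same.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    gap_m: float              # distance to the vehicle ahead, in meters
    closing_speed_mps: float  # how fast that gap shrinks (m/s); <= 0 means not closing
    adjacent_lane_free: bool


def time_to_collision(gap_m, closing_speed_mps):
    """Seconds until contact if nothing changes; infinite if the gap is not shrinking."""
    if closing_speed_mps <= 0:
        return float("inf")
    return gap_m / closing_speed_mps


def risk(gap_m, closing_speed_mps):
    """Illustrative risk term: grows as time-to-collision gets shorter."""
    return 10.0 / max(time_to_collision(gap_m, closing_speed_mps), 0.1)


def maneuver_cost(option, s):
    """Hypothetical cost (lower is better); all weights are made up."""
    if option == "keep":
        return risk(s.gap_m, s.closing_speed_mps)
    if option == "slow_down":
        # Assume braking reduces the closing speed by about 3 m/s,
        # at the price of a small penalty for lost progress.
        return risk(s.gap_m, s.closing_speed_mps - 3.0) + 1.0
    if option == "change_lane":
        # Ruled out if the next lane is occupied; otherwise a small fixed risk.
        return 1000.0 if not s.adjacent_lane_free else 2.0
    raise ValueError(f"unknown option: {option}")


def choose_maneuver(s):
    options = ["keep", "slow_down", "change_lane"]
    return min(options, key=lambda o: maneuver_cost(o, s))


print(choose_maneuver(Situation(gap_m=20.0, closing_speed_mps=5.0, adjacent_lane_free=False)))
# prints: slow_down
```

The chosen maneuver would then be handed to the action step, which turns it into steering, brake, and throttle commands.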

Training with real data and simulations

One of the biggest challenges for autonomous AI is handling rare situations, often called edge cases. These situations do not happen often, but they are critical for safety. For example, a car might encounter a person in an unusual costume, a cyclist riding against traffic, or a construction zone that suddenly appears on the road.

To address this problem, Tesla takes advantage of its huge fleet of cars operating worldwide. Whenever a driver has to take over from the self-driving system, data from that situation is sent back to the data center. These examples become "real-world lessons" that help the model learn to handle similar situations in the future.
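
One plausible way to capture the moments when a driver has to step in is a rolling buffer of recent frames that is saved whenever an intervention happens. This is a hypothetical sketch, not Tesla's actual pipeline: the `InterventionRecorder` class, the window size, and the `uploads` list standing in for a transfer to the data center are all assumptions.

```python
from collections import deque


class InterventionRecorder:
    """Keep a rolling window of recent frames; snapshot it when the driver takes over."""

    def __init__(self, window_size=5):
        self.buffer = deque(maxlen=window_size)  # oldest frames fall off automatically
        self.uploads = []                        # stand-in for "send to the data center"

    def on_frame(self, frame, driver_intervened):
        self.buffer.append(frame)
        if driver_intervened:
            # Copy the window so later frames do not alter the saved clip.
            self.uploads.append(list(self.buffer))


recorder = InterventionRecorder(window_size=3)
for t in range(6):
    # Hypothetical drive: the human takes over at time step 4.
    recorder.on_frame(f"frame_{t}", driver_intervened=(t == 4))

print(recorder.uploads)  # [['frame_2', 'frame_3', 'frame_4']]
```

Saving the frames leading up to the takeover matters because the cause of a disengagement usually appears a moment before the driver reacts.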

Besides real data, companies also use simulated environments to train AI. In these virtual worlds, the system can experience millions of different scenarios without putting anyone at risk. Generative AI models are also used to create difficult conditions such as heavy rain, heavy snow, or chaotic traffic. As a result, neural networks can "test drive" billions of miles in simulation before being deployed in real life.
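
The sketch below illustrates the simplest form of scenario variation: randomly sampling weather, traffic density, and rare events for each simulated run. It uses plain random sampling rather than a generative model, and the `Scenario` fields, value ranges, and `edge_case_rate` parameter are assumptions. Over-sampling rare events is one common way to make a model encounter edge cases far more often than it would on real roads.

```python
import random
from dataclasses import dataclass

WEATHER = ["clear", "heavy_rain", "heavy_snow", "fog"]


@dataclass(frozen=True)
class Scenario:
    weather: str
    vehicles: int      # other cars in the scene
    pedestrians: int
    jaywalker: bool    # a rare "edge case" event


def sample_scenarios(n, seed=0, edge_case_rate=0.2):
    """Draw n randomized driving scenarios for a simulator.

    edge_case_rate deliberately over-represents rare events compared with
    real roads, so the model practices them far more often.
    """
    rng = random.Random(seed)  # fixed seed makes the scenario set reproducible
    return [
        Scenario(
            weather=rng.choice(WEATHER),
            vehicles=rng.randint(0, 40),
            pedestrians=rng.randint(0, 15),
            jaywalker=rng.random() < edge_case_rate,
        )
        for _ in range(n)
    ]


for scenario in sample_scenarios(3):
    print(scenario)
```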

From self-driving cars to humanoid robots

Once AI systems have learned to navigate complex environments safely, the next step is to extend that ability to other physical tasks. This has led to the development of robots that interact directly with the world around them. A notable example is Tesla's Optimus humanoid robot.

Unlike self-driving cars, which only need to control the wheels and steering, humanoid robots must perform much more sophisticated operations. They need to learn to grasp objects, keep their balance while moving, and interact with the environment much as humans do. This requires combining computer vision, motion control, and machine learning.

An important technique in this process is reinforcement learning. In this learning method, the robot tries a variety of actions and receives a reward when it completes the task correctly. Over millions of trials, the system gradually learns how to adjust grip force, hand angle, and body movement to complete the task. For example, the robot can learn that holding an egg requires a very light grip, while holding a wrench requires more force.
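
The egg-versus-wrench example can be reduced to a tiny trial-and-error problem. The sketch below is a one-step (multi-armed bandit) simplification of reinforcement learning, assuming a handful of discrete grip forces and a made-up reward: +1 for a secure grasp, -1 if the object slips or is crushed. The force values and object limits are hypothetical.

```python
import random

FORCES = [1, 2, 4, 8, 16]  # candidate grip forces in newtons (made-up values)

# Hypothetical object limits: below "min" the object slips, above "max" it breaks.
OBJECTS = {
    "egg": {"min": 1, "max": 3},
    "wrench": {"min": 6, "max": 50},
}


def reward(obj, force):
    limits = OBJECTS[obj]
    if force < limits["min"] or force > limits["max"]:
        return -1.0  # slipped or crushed
    return 1.0       # secure grasp


def learn_grip(obj, trials=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy trial and error: mostly reuse the best force, sometimes explore."""
    rng = random.Random(seed)
    value = {f: 0.0 for f in FORCES}  # running average reward for each force
    count = {f: 0 for f in FORCES}
    for _ in range(trials):
        if rng.random() < epsilon:
            force = rng.choice(FORCES)          # explore a random force
        else:
            force = max(FORCES, key=value.get)  # exploit the best force so far
        r = reward(obj, force)
        count[force] += 1
        value[force] += (r - value[force]) / count[force]  # incremental mean
    return max(FORCES, key=value.get)


for obj in OBJECTS:
    print(obj, "->", learn_grip(obj), "N")
```

After enough trials the learner settles on a light force for the egg and a firmer one for the wrench. A real robot would instead learn a continuous policy over grip force, hand angle, and body movement, usually starting in simulation.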

The future of artificial intelligence

Embodied AI is considered the next step in the development of artificial intelligence. If language models help AI understand human knowledge, embodied systems will allow that intelligence to interact directly with the physical world. This could open up many applications in manufacturing, healthcare, logistics, and even everyday home life.

However, the road ahead is still challenging. AI systems must reach a very high level of safety before they can operate widely in environments shared with people. In addition, combining perception, planning, and action in a single unified system remains a complex problem in computer science and robotics.

Even so, the trend points in a clear direction. Artificial intelligence is gradually shifting from algorithms that run inside computers to entities that can observe, think, and act in the real world. When that happens, AI will no longer be just lines of code running on servers, but a tangible part of the environment around us.